Global Stability Analysis for Periodic Solution in Discontinuous Neural Networks with Nonlinear Growth Activations

Advances in Difference Equations volume 2009, Article number: 798685 (2009)

Abstract

This paper considers a new class of additive neural networks where the neuron activations are modelled by discontinuous functions with nonlinear growth. By the Leray-Schauder alternative theorem in differential inclusion theory, matrix theory, and the generalized Lyapunov approach, a general result is derived which ensures the existence and global asymptotical stability of a unique periodic solution for such neural networks. The obtained results can be applied to neural networks with a broad range of activation functions, assuming neither boundedness nor monotonicity, and also show that Forti's conjecture for discontinuous neural networks with nonlinear growth activations is true.

1. Introduction

The stability of neural networks, which includes the stability of periodic solutions and the stability of equilibrium points, has been extensively studied by many authors; see, for example, [1–15]. In [1–4], the authors investigated the stability of periodic solutions of neural networks with or without time delays, where the assumptions on the neuron activation functions include Lipschitz conditions, boundedness, and/or monotonicity. Recently, in [13–15], the authors discussed global stability of the equilibrium points for neural networks with discontinuous neuron activations. In particular, in [14], Forti conjectures that all solutions of neural networks with discontinuous neuron activations converge to an asymptotically stable limit cycle whenever the neuron inputs are periodic functions. As far as we know, the only works dealing with this conjecture are those of Wu in [5, 7] and Papini and Taddei in [9]. However, the activation functions are required to be monotonic in [5, 7, 9] and to be bounded in [5, 7].

In this paper, without assumptions of the boundedness and the monotonicity of the activation functions, by the Leray-Schauder alternative theorem in differential inclusion theory and some new analysis techniques, we study the existence of periodic solution for discontinuous neural networks with nonlinear growth activations. By constructing suitable Lyapunov functions we give a general condition on the global asymptotical stability of periodic solution. The results obtained in this paper show that Forti's conjecture in [14] for discontinuous neural networks with nonlinear growth activations is true.

For later discussion, we introduce the following notations.

Let $x = (x_1, \ldots, x_n)'$, where the prime means the transpose. By $x > 0$ (resp., $x \ge 0$) we mean that $x_i > 0$ (resp., $x_i \ge 0$) for all $i = 1, \ldots, n$. $\|x\|$ denotes the Euclidean norm of $x$. $\langle \cdot, \cdot \rangle$ denotes the inner product. $\|M\|$ denotes the 2-norm of a matrix $M$, that is, $\|M\| = \sqrt{\rho(M'M)}$, where $\rho(M'M)$ denotes the spectral radius of $M'M$.
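As a small numerical illustration of the matrix 2-norm used above, the following sketch estimates $\sqrt{\rho(M'M)}$ by power iteration. The function name `spectral_norm`, the iteration count, and the all-ones starting vector are illustrative assumptions, not part of the paper.

```python
import math

def spectral_norm(M, iters=500):
    """Estimate the 2-norm of M as sqrt(rho(M'M)) using power iteration."""
    rows, cols = len(M), len(M[0])
    # S = M'M is symmetric positive semidefinite, so rho(S) is its largest eigenvalue.
    S = [[sum(M[k][i] * M[k][j] for k in range(rows)) for j in range(cols)]
         for i in range(cols)]
    # Assumption: the all-ones start is not orthogonal to the dominant eigenvector.
    v = [1.0] * cols
    for _ in range(iters):
        w = [sum(S[i][j] * v[j] for j in range(cols)) for i in range(cols)]
        norm_w = math.sqrt(sum(c * c for c in w))
        if norm_w == 0.0:
            return 0.0
        v = [c / norm_w for c in w]
    Sv = [sum(S[i][j] * v[j] for j in range(cols)) for i in range(cols)]
    rho = sum(v[i] * Sv[i] for i in range(cols))  # Rayleigh quotient of a unit vector
    return math.sqrt(rho)

print(spectral_norm([[3.0, 0.0], [0.0, 4.0]]))
print(spectral_norm([[1.0, 2.0], [3.0, 4.0]]))
```

For a diagonal matrix the result is the largest absolute diagonal entry, which gives a quick sanity check.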

Given a set $C \subset \mathbb{R}^n$, by $K[C]$ we denote the closure of the convex hull of $C$, and $P_{kc}(C)$ denotes the collection of all nonempty, closed, and convex subsets of $C$. Let $Y$ be a Banach space, and $\|y\|_Y$ denotes the norm of $y$, $y \in Y$. By $L^1([0,\omega], \mathbb{R}^n)$ we denote the Banach space of the Lebesgue integrable functions $x(\cdot)$: $[0,\omega] \to \mathbb{R}^n$ equipped with the norm $\|x\|_1 = \int_0^\omega \|x(t)\|\,dt$. Let $V: \mathbb{R}^n \to \mathbb{R}$ be a locally Lipschitz continuous function. Clarke's generalized gradient [16] of $V$ at $x$ is defined by

$$\partial V(x) = K\Big[\lim_{i \to \infty} \nabla V(x_i) : x_i \to x,\ x_i \notin N \cup \Omega\Big],$$

where $\Omega$ is the set of Lebesgue measure zero where $\nabla V$ does not exist, and $N$ is an arbitrary set with measure zero.

The rest of this paper is organized as follows. Section 2 develops a discontinuous neural network model with nonlinear growth activations and gives some preliminaries. Section 3 proves the existence of a periodic solution. Section 4 discusses global asymptotical stability of the neural network. An illustrative example is provided in Section 5 to show the effectiveness of the obtained results.

2. Model Description and Preliminaries

The model we consider in the present paper is the neural network modeled by the differential equation

$$\frac{dx(t)}{dt} = -Ax(t) + Bg(x(t)) + I(t), \tag{2.1}$$

where $x(t) = (x_1(t), \ldots, x_n(t))'$ is the vector of neuron states at time $t$; $A$ is an $n \times n$ matrix representing the neuron inhibition; $B$ is an $n \times n$ neuron interconnection matrix; $g: \mathbb{R}^n \to \mathbb{R}^n$, $g(x) = (g_1(x_1), \ldots, g_n(x_n))'$, represents the neuron input-output activation and $I(t) = (I_1(t), \ldots, I_n(t))'$ is the continuous $\omega$-periodic vector function denoting neuron inputs.

Throughout the paper, we assume that

:

:  has only a finite number of discontinuity points in every compact set of

has only a finite number of discontinuity points in every compact set of  . Moreover, there exist finite right limit

. Moreover, there exist finite right limit  and left limit

and left limit  at discontinuity point

at discontinuity point  .

.

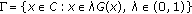

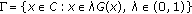

has the nonlinear growth property, that is, for all

has the nonlinear growth property, that is, for all

where  ,

,  are constants, and

are constants, and  .

.

:

:  for all

for all  , where

, where  is a constant.

is a constant.

Under the assumption  ,

,  is undefined at the points where

is undefined at the points where  is discontinuous. Equation (2.1) is a differential equation with a discontinuous right-hand side. For (2.1), we adopt the following definition of the solution in the sense of Filippov [17] in this paper.

is discontinuous. Equation (2.1) is a differential equation with a discontinuous right-hand side. For (2.1), we adopt the following definition of the solution in the sense of Filippov [17] in this paper.
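To make the Filippov viewpoint concrete, the sketch below fills a jump of a scalar activation with the closed interval between its one-sided limits and then checks a sublinear growth bound of the form $c(1 + |x|^{\alpha})$ on a grid. The activation `g`, the constants `c = 1.5` and `alpha = 0.5`, and the finite-difference approximation of the one-sided limits are all illustrative assumptions, not taken from the paper.

```python
import math

def g(x):
    """Hypothetical activation: jump at 0, unbounded, growth like |x|**0.5."""
    if x > 0:
        return 1.0 + math.sqrt(x)
    if x < 0:
        return -1.0 - math.sqrt(-x)
    return 0.0  # the value at the jump itself is irrelevant for the Filippov set

def filippov_interval(func, x, eps=1e-9):
    """Approximate [min(g(x-), g(x+)), max(g(x-), g(x+))] by one-sided samples."""
    left, right = func(x - eps), func(x + eps)
    return (min(left, right), max(left, right))

def satisfies_growth(func, xs, c, alpha):
    """Check sup |gamma| <= c * (1 + |x|**alpha) over the sampled points xs."""
    for x in xs:
        lo, hi = filippov_interval(func, x)
        if max(abs(lo), abs(hi)) > c * (1.0 + abs(x) ** alpha):
            return False
    return True

grid = [0.5 * k for k in range(-200, 201)]
print(filippov_interval(g, 0.0))          # approximately (-1, 1) at the jump
print(satisfies_growth(g, grid, 1.5, 0.5))
```

At a continuity point the interval collapses (up to the sampling error `eps`) to the single value of the activation, so the differential inclusion only differs from the original equation at the jumps.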

Definition 2.1.

Under the assumption $(H_1)$, a solution of (2.1) on an interval $[0, b)$ with the initial value $x(0) = x_0$ is an absolutely continuous function satisfying

$$\frac{dx(t)}{dt} \in -Ax(t) + BK[g(x(t))] + I(t), \quad \text{for a.e. } t \in [0, b). \tag{2.3}$$

It is easy to see that $\phi$: $(t, x) \mapsto -Ax + BK[g(x)] + I(t)$ is an upper semicontinuous set-valued map with nonempty compact convex values; hence, it is measurable [18]. By the measurable selection theorem [19], if $x(t)$ is a solution of (2.1), then there exists a measurable function $\gamma(t) \in K[g(x(t))]$ such that

$$\frac{dx(t)}{dt} = -Ax(t) + B\gamma(t) + I(t), \quad \text{for a.e. } t \in [0, b). \tag{2.4}$$

Consider the following differential inclusion problem:

$$\frac{dx(t)}{dt} \in -Ax(t) + BK[g(x(t))] + I(t) \ \text{ for a.e. } t \in [0, \omega], \qquad x(0) = x(\omega). \tag{2.5}$$

It easily follows that if $x(t)$ is a solution of (2.5), then $x^*(t)$ defined by

$$x^*(t) = x(t - j\omega), \quad t \in [j\omega, (j+1)\omega], \ j = 0, 1, 2, \ldots$$

is an $\omega$-periodic solution of (2.1). Hence, for the neural network (2.1), finding the periodic solutions is equivalent to finding solutions of (2.5).
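The passage from a solution of the boundary-value problem on one period to a periodic function on the whole half-line can be sketched as follows. The helper `periodic_extension` and the sample profile are hypothetical; the only property used is that the profile takes the same value at both ends of $[0, \omega]$.

```python
import math

def periodic_extension(x, omega):
    """Extend x, defined on [0, omega] with x(0) == x(omega), to all t >= 0."""
    def x_star(t):
        j = math.floor(t / omega)      # index of the period containing t
        return x(t - j * omega)        # shift t back into [0, omega)
    return x_star

# Hypothetical one-period profile with matching endpoint values.
profile = lambda t: t * (1.0 - t)
x_star = periodic_extension(profile, 1.0)
print(x_star(0.25), x_star(2.25))
```

Because the endpoint values agree, the extension is continuous across the period boundaries, which is what makes it an admissible absolutely continuous solution.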

Definition 2.2.

The periodic solution $x^*(t)$ with initial value $x^*(0) = x_0^*$ of the neural network (2.1) is said to be globally asymptotically stable if $x^*(t)$ is stable and for any solution $x(t)$, whose existence interval is $[0, +\infty)$, we have $\lim_{t \to +\infty} \|x(t) - x^*(t)\| = 0$.

Lemma 2.3.

If $X$ is a Banach space, $C \subset X$ is nonempty closed convex with $0 \in C$ and $G: C \to P_{kc}(C)$ is an upper semicontinuous set-valued map which maps bounded sets into relatively compact sets, then one of the following statements is true:

(a) the set $\Gamma = \{x \in C : x \in \lambda G(x),\ \lambda \in (0,1)\}$ is unbounded;

(b) the map $G(\cdot)$ has a fixed point in $C$, that is, there exists $x \in C$ such that $x \in G(x)$.

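Lemma 2.3 is known as the Leray-Schauder alternative theorem, whose proof can be found in [20]. Define the following:

$$W_p^{1,1}([0,\omega],\mathbb{R}^n) = \{x(\cdot) : x \text{ is absolutely continuous on } [0,\omega],\ x(0) = x(\omega)\}, \qquad \|x\|_{W^{1,1}} = \|x\|_1 + \|x'\|_1,$$

then $\|\cdot\|_{W^{1,1}}$ is a norm on $W_p^{1,1}([0,\omega],\mathbb{R}^n)$, and $W_p^{1,1}([0,\omega],\mathbb{R}^n)$ is a Banach space under the norm $\|\cdot\|_{W^{1,1}}$.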

If $V: \mathbb{R}^n \to \mathbb{R}$ is (i) regular in $\mathbb{R}^n$ [16]; (ii) positive definite, that is, $V(x) > 0$ for $x \ne 0$, and $V(0) = 0$; (iii) radially unbounded, that is, $V(x) \to +\infty$ as $\|x\| \to +\infty$, then $V$ is said to be C-regular.

Lemma 2.4 (Chain Rule [15]).

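If $V(x): \mathbb{R}^n \to \mathbb{R}$ is C-regular and $x(t): [0, +\infty) \to \mathbb{R}^n$ is absolutely continuous on any compact interval of $[0, +\infty)$, then $x(t)$ and $V(x(t)): [0, +\infty) \to \mathbb{R}$ are differentiable for a.e. $t \in [0, +\infty)$, and one has

$$\frac{d}{dt} V(x(t)) = \Big\langle \zeta, \frac{dx(t)}{dt} \Big\rangle, \quad \forall \zeta \in \partial V(x(t)).$$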

3. Existence of Periodic Solution

Theorem 3.1.

If the assumptions $(H_1)$ and $(H_2)$ hold, then for any $x_0 \in \mathbb{R}^n$, (2.1) has at least a solution defined on $[0, +\infty)$ with the initial value $x(0) = x_0$.

Proof.

By the assumption $(H_1)$, it is easy to get that $\phi$: $(t, x) \mapsto -Ax + BK[g(x)] + I(t)$ is an upper semicontinuous set-valued map with nonempty, compact, and convex values. Hence, by Definition 2.1, the local existence of a solution $x(t)$ for (2.1) on $[0, t_0)$, $t_0 > 0$, with $x(0) = x_0$, is obvious [17].

Set $\psi(t, x) = BK[g(x)] + I(t)$. Since $I(t)$ is a continuous $\omega$-periodic vector function, $I(t)$ is bounded, that is, there exists a constant $\bar{I} > 0$ such that $\|I(t)\| \le \bar{I}$, $t \in [0, +\infty)$. By the assumption $(H_1)$(2), with $c$ and $\alpha$ the constants of the growth condition, we have

$$\|\psi(t, x)\| = \sup_{\eta \in \psi(t,x)} \|\eta\| \le \|B\|\, c\,(1 + \|x\|^{\alpha}) + \bar{I}. \tag{3.1}$$

By $\alpha \in [0, 1)$, we can choose a constant $R > 0$, such that when $\|x\| > R$,

$$\|B\|\, c\,(1 + \|x\|^{\alpha}) + \bar{I} < \frac{a}{2}\|x\|, \tag{3.2}$$

where $a$ is the constant in $(H_2)$. By (2.4), (3.1), (3.2), and the Cauchy inequality, when $\|x(t)\| > R$,

$$\frac{d}{dt}\Big(\frac{1}{2}\|x(t)\|^2\Big) = \langle x(t), -Ax(t) + B\gamma(t) + I(t) \rangle \le -a\|x(t)\|^2 + \|x(t)\|\,\|\psi(t, x(t))\| < -\frac{a}{2}\|x(t)\|^2 < 0. \tag{3.3}$$

Therefore, let $M = \max\{\|x_0\|, R\}$, then, by (3.3), it follows that $\|x(t)\| \le M$ on $[0, t_0)$. This means that the local solution $x(t)$ is bounded. Thus, (2.1) has at least a solution with the initial value $x(0) = x_0$ on $[0, +\infty)$. This completes the proof.

Theorem 3.1 shows the existence of solutions of (2.1). In the following, we will prove that (2.1) has an $\omega$-periodic solution.

Let $Lx = x' + Ax$ for all $x \in W_p^{1,1}([0,\omega],\mathbb{R}^n)$, then $L: W_p^{1,1}([0,\omega],\mathbb{R}^n) \to L^1([0,\omega],\mathbb{R}^n)$ is a linear operator.

Proposition 3.2.

$L$ is bounded, one to one and surjective.

Proof.

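For any $x \in W_p^{1,1}([0,\omega],\mathbb{R}^n)$, we have

$$\|Lx\|_1 = \|x' + Ax\|_1 \le \|x'\|_1 + \|A\|\,\|x\|_1 \le (1 + \|A\|)\,\|x\|_{W^{1,1}}, \tag{3.4}$$

this implies that $L$ is bounded.

Let $x_1, x_2 \in W_p^{1,1}([0,\omega],\mathbb{R}^n)$. If $Lx_1 = Lx_2$, then

$$x_1'(t) - x_2'(t) = -A\,(x_1(t) - x_2(t)). \tag{3.5}$$

By the assumption $(H_2)$,

$$\frac{d}{dt}\Big(\frac{1}{2}\|x_1(t) - x_2(t)\|^2\Big) = -\langle x_1(t) - x_2(t), A(x_1(t) - x_2(t)) \rangle \le -a\,\|x_1(t) - x_2(t)\|^2. \tag{3.6}$$

Noting $x_1(0) - x_2(0) = x_1(\omega) - x_2(\omega)$, we have

$$\int_0^{\omega} \frac{d}{dt}\Big(\frac{1}{2}\|x_1(t) - x_2(t)\|^2\Big)\,dt = 0. \tag{3.7}$$

By (3.6),

$$0 \le -a \int_0^{\omega} \|x_1(t) - x_2(t)\|^2\,dt \le 0. \tag{3.8}$$

Hence $\int_0^{\omega} \|x_1(t) - x_2(t)\|^2\,dt = 0$. It follows $x_1 = x_2$. This shows that $L$ is one to one.

Let $h \in L^1([0,\omega],\mathbb{R}^n)$. In order to verify that $L$ is surjective, in the following, we will prove that there exists $x \in W_p^{1,1}([0,\omega],\mathbb{R}^n)$ such that

$$Lx = h, \tag{3.9}$$

that is, we will prove that there exists a solution for the differential equation

$$x'(t) + Ax(t) = h(t), \qquad x(0) = x(\omega). \tag{3.10}$$

Consider Cauchy problem

$$x'(t) + Ax(t) = h(t), \qquad x(0) = \xi. \tag{3.11}$$

It is easily checked that

$$x(t) = e^{-At}\xi + \int_0^t e^{-A(t-s)} h(s)\,ds \tag{3.12}$$

is the solution of (3.11). By (3.12), we want $x(0) = x(\omega)$, then

$$\xi = e^{-A\omega}\xi + \int_0^{\omega} e^{-A(\omega-s)} h(s)\,ds, \tag{3.13}$$

that is,

$$(E - e^{-A\omega})\,\xi = \int_0^{\omega} e^{-A(\omega-s)} h(s)\,ds. \tag{3.14}$$

By the assumption $(H_2)$, $E - e^{-A\omega}$ is a nonsingular matrix, where $E$ is a unit matrix. Thus, by (3.14), if we take $\xi$ as

$$\xi = (E - e^{-A\omega})^{-1} \int_0^{\omega} e^{-A(\omega-s)} h(s)\,ds \tag{3.15}$$

in (3.12), then (3.12) is the solution of (3.10). This shows that $L$ is surjective. This completes the proof.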
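The surjectivity argument can be checked numerically in the scalar case. The sketch below assumes the linear operator has the form $x' + ax$ with a scalar $a > 0$ (a hypothetical special case), computes the initial value that makes the solution of $x' + ax = h(t)$ periodic via the variation-of-constants formula, and then integrates over one period to confirm that the trajectory returns to its starting point. The forcing term, step counts, and function names are illustrative choices.

```python
import math

def periodic_initial_value(a, h, omega, n=2000):
    """Initial value x0 such that the solution of x' + a*x = h(t) has x(0) = x(omega).

    Uses x0 = (1 - exp(-a*omega))**-1 * integral_0^omega exp(-a*(omega - s)) h(s) ds,
    with the integral approximated by the composite trapezoid rule.
    """
    ds = omega / n
    vals = [math.exp(-a * (omega - k * ds)) * h(k * ds) for k in range(n + 1)]
    integral = ds * (sum(vals) - 0.5 * (vals[0] + vals[-1]))
    return integral / (1.0 - math.exp(-a * omega))

def integrate_one_period(a, h, omega, x0, n=2000):
    """Classical fourth-order Runge-Kutta for x' = -a*x + h(t) on [0, omega]."""
    def f(t, x):
        return -a * x + h(t)
    dt, t, x = omega / n, 0.0, x0
    for _ in range(n):
        k1 = f(t, x)
        k2 = f(t + 0.5 * dt, x + 0.5 * dt * k1)
        k3 = f(t + 0.5 * dt, x + 0.5 * dt * k2)
        k4 = f(t + dt, x + dt * k3)
        x += dt * (k1 + 2.0 * k2 + 2.0 * k3 + k4) / 6.0
        t += dt
    return x

a, omega = 2.0, 1.0                              # hypothetical scalar data
h = lambda t: math.sin(2.0 * math.pi * t / omega)
x0 = periodic_initial_value(a, h, omega)
x_end = integrate_one_period(a, h, omega, x0)
print(x0, x_end)
```

The factor $1 - e^{-a\omega}$ is nonzero exactly because $a > 0$, which is the scalar counterpart of the nonsingularity used in the proof.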

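By the Banach inverse operator theorem, $L^{-1}: L^1([0,\omega],\mathbb{R}^n) \to W_p^{1,1}([0,\omega],\mathbb{R}^n)$ is a bounded linear operator.

For any $x \in L^1([0,\omega],\mathbb{R}^n)$, define the set-valued map $N$ as

$$N(x) = \{v \in L^1([0,\omega],\mathbb{R}^n) : v(t) \in BK[g(x(t))] + I(t) \ \text{for a.e. } t \in [0,\omega]\}. \tag{3.16}$$

Then $N$ has the following properties.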

Proposition 3.3.

$N(\cdot)$ has nonempty closed convex values in $L^1([0,\omega],\mathbb{R}^n)$ and is also upper semicontinuous from $L^1([0,\omega],\mathbb{R}^n)$ into $L^1([0,\omega],\mathbb{R}^n)$ endowed with the weak topology.

Proof.

The closedness and convexity of values of $N(\cdot)$ are clear. Next, we verify the nonemptiness. In fact, for any $x \in L^1([0,\omega],\mathbb{R}^n)$, there exists a sequence of step functions $\{s_n\}$ such that $\|s_n(t)\| \le \|x(t)\|$ and $s_n(t) \to x(t)$ a.e. on $[0,\omega]$. By the assumption $(H_1)$(1) and the continuity of $I(t)$, we can get that $(t, x) \mapsto BK[g(x)] + I(t)$ is graph measurable. Hence, for any $n$, $t \mapsto BK[g(s_n(t))] + I(t)$ admits a measurable selector $v_n(t)$. By the assumption $(H_1)$(2), $\{v_n\}$ is uniformly integrable. So by the Dunford-Pettis theorem, there exists a subsequence $\{v_{n_k}\}$ such that $v_{n_k} \to v$ weakly in $L^1([0,\omega],\mathbb{R}^n)$. Hence, from [21, Theorem 3.1], we have

$$v(t) \in K\big[w\text{-}\overline{\lim}\,\{v_{n_k}(t)\}_{k \ge 1}\big] \subset K\big[\overline{\lim}\,\big(BK[g(s_{n_k}(t))] + I(t)\big)\big], \quad \text{a.e. on } [0,\omega]. \tag{3.17}$$

Noting that $x \mapsto BK[g(x)] + I(t)$ is an upper semicontinuous set-valued map with nonempty closed convex values on $\mathbb{R}^n$ for a.e. $t \in [0,\omega]$, $K[\overline{\lim}\,(BK[g(s_{n_k}(t))] + I(t))] \subset BK[g(x(t))] + I(t)$. Therefore, $v \in N(x)$. This shows that $N(x)$ is nonempty.

At last we will prove that $N$ is upper semicontinuous from $L^1([0,\omega],\mathbb{R}^n)$ into $L^1([0,\omega],\mathbb{R}^n)$ with the weak topology. Let $C$ be a nonempty and weakly closed subset of $L^1([0,\omega],\mathbb{R}^n)$, then we need only to prove that the set

$$N^{-1}(C) = \{x \in L^1([0,\omega],\mathbb{R}^n) : N(x) \cap C \ne \emptyset\} \tag{3.18}$$

is closed. Let $\{x_n\} \subset N^{-1}(C)$ and assume $x_n \to x$ in $L^1([0,\omega],\mathbb{R}^n)$, then there exists a subsequence $\{x_{n_k}\}$ such that $x_{n_k}(t) \to x(t)$ a.e. on $[0,\omega]$. Take $v_k \in N(x_{n_k}) \cap C$, $k \ge 1$, then by the assumption $(H_1)$(2) and the Dunford-Pettis theorem, there exists a subsequence $\{v_{k_j}\}$ such that $v_{k_j} \to v \in C$ weakly in $L^1([0,\omega],\mathbb{R}^n)$. As before we have

$$v(t) \in K\big[w\text{-}\overline{\lim}\,\{v_{k_j}(t)\}_{j \ge 1}\big] \subset BK[g(x(t))] + I(t), \quad \text{a.e. on } [0,\omega]. \tag{3.19}$$

This implies $x \in N^{-1}(C)$, that is, $N^{-1}(C)$ is closed in $L^1([0,\omega],\mathbb{R}^n)$. The proof is complete.

Theorem 3.4.

Under the assumptions $(H_1)$ and $(H_2)$, there exists a solution for the boundary-value problem (2.5), that is, the neural network (2.1) has an $\omega$-periodic solution.

Proof.

Consider the set-valued map $L^{-1}N$. Since $L^{-1}$ is continuous and $N$ is upper semicontinuous, the set-valued map $L^{-1}N$ is upper semicontinuous. Let $D \subset L^1([0,\omega],\mathbb{R}^n)$ be a bounded set, then

$$N(D) = \bigcup_{x \in D} N(x) \tag{3.20}$$

is a bounded subset of $L^1([0,\omega],\mathbb{R}^n)$. Since $L^{-1}$ is a bounded linear operator, $L^{-1}N(D)$ is a bounded subset of $W_p^{1,1}([0,\omega],\mathbb{R}^n)$. Noting that $W_p^{1,1}([0,\omega],\mathbb{R}^n)$ is compactly embedded in $L^1([0,\omega],\mathbb{R}^n)$, $L^{-1}N(D)$ is a relatively compact subset of $L^1([0,\omega],\mathbb{R}^n)$. Hence by Proposition 3.3, $L^{-1}N$ is an upper semicontinuous set-valued map which maps bounded sets into relatively compact sets.

For any  , when  , by (3.1) and the Cauchy inequality,

Arguing as (3.2), we can choose a constant  , such that when  ,

Therefore, when  , by (3.21),

Set

$$\Gamma = \{x \in L^1([0,\omega],\mathbb{R}^n) : x \in \lambda L^{-1}N(x),\ \lambda \in (0,1)\}. \tag{3.24}$$

In the following, we will prove that $\Gamma$ is a bounded subset of $L^1([0,\omega],\mathbb{R}^n)$. Let $x \in \Gamma$, then $x \in \lambda L^{-1}N(x)$, that is, $Lx \in \lambda N(x)$. By the definition of $N$, there exists a measurable selection $\gamma(t) \in K[g(x(t))]$, such that

By (3.23) and (3.25),  . Otherwise,  . By  , we have  . Since $x(t)$ is continuous, we can choose  , such that

Thus, there exists a constant  , such that when  ,  . By (3.23) and (3.25),

This is a contradiction. Thus, for any  . Furthermore, we have

This shows that $\Gamma$ is a bounded subset of $L^1([0,\omega],\mathbb{R}^n)$.

By Lemma 2.3, the set-valued map $L^{-1}N$ has a fixed point, that is, there exists $x \in L^1([0,\omega],\mathbb{R}^n)$ such that $x \in L^{-1}N(x)$, $Lx \in N(x)$. Hence there exists a measurable selection $\gamma(t) \in K[g(x(t))]$, such that

$$x'(t) + Ax(t) = B\gamma(t) + I(t), \quad \text{for a.e. } t \in [0,\omega]. \tag{3.29}$$

By the definition of $L$, $x \in W_p^{1,1}([0,\omega],\mathbb{R}^n)$. Moreover, by Definition 2.1 and (3.29), $x(t)$ is a solution of the boundary-value problem (2.5), that is, the neural network (2.1) has an $\omega$-periodic solution. The proof is completed.

4. Global Asymptotical Stability of Periodic Solution

Theorem 4.1.

Suppose that $(H_1)$ and the following assumptions are satisfied.

$(H_3)$: for each $i \in \{1, \ldots, n\}$, there exists a constant  , such that for all two different numbers  , for all   and for all

$(H_4)$: $A$ is a diagonal matrix, and there exists a positive diagonal matrix $P$ such that   and

where   is the minimum eigenvalue of the symmetric matrix  ,  , for all  . Then the neural network (2.1) has a unique $\omega$-periodic solution which is globally asymptotically stable.

Proof.

By the assumptions $(H_3)$ and $(H_4)$, there exists a positive constant   such that

for all  ,  , and

In fact, from (4.2), we have

Choose  , then  , which implies that (4.3) holds from (4.1), and (4.4) is also satisfied. By the assumption $(H_4)$, it is easy to get that the assumption $(H_2)$ is satisfied. By Theorem 3.4, the neural network (2.1) has an $\omega$-periodic solution. Let $x^*(t)$ be an $\omega$-periodic solution of the neural network (2.1). Consider the change of variables $z(t) = x(t) - x^*(t)$, which transforms (2.4) into the differential equation

where  ,  , and  . Obviously, $z(t) = 0$ is a solution of (4.6).

Consider the following Lyapunov function:

By (4.3),

and thus  . In addition, it is easy to check that $V$ is regular in $\mathbb{R}^n$ and  . This implies that $V$ is C-regular. Calculate the derivative of $V$ along the solution $z(t)$ of (4.6). By Lemma 2.4, (4.3), and (4.4),

where  . Thus, the solution $z(t) = 0$ of (4.6) is globally asymptotically stable, so is the periodic solution $x^*(t)$ of the neural network (2.1). Consequently, the periodic solution $x^*(t)$ is unique. The proof is completed.

Remark 4.2.

(1) If $g_i$ is nondecreasing, then the assumption $(H_3)$ obviously holds. Thus the assumption $(H_3)$ is more general.

(2) In [14], Forti et al. considered delayed neural networks modelled by the differential equation

$$\frac{dx(t)}{dt} = -Ax(t) + Bg(x(t)) + B^{\tau}g(x(t - \tau)) + I, \tag{4.10}$$

where $A$ is a positive diagonal matrix, and $B^{\tau}$ is an $n \times n$ constant matrix which represents the delayed neuron interconnection. When $g_i$ satisfies the assumption $(H_1)$(1) and is nondecreasing and bounded, [14] investigated the existence and global exponential stability of the equilibrium point, and global convergence in finite time for the neural network (4.10). At last, Forti conjectured that the neural network

$$\frac{dx(t)}{dt} = -Ax(t) + Bg(x(t)) + B^{\tau}g(x(t - \tau)) + I(t) \tag{4.11}$$

has a unique periodic solution and all solutions converge to the asymptotically stable limit cycle when $I(t)$ is a periodic function. When $B^{\tau} = 0$, the neural network (4.11) reduces to the neural network (2.1) without delays. Thus, without assumptions of the boundedness and the monotonicity of the activation functions, Theorem 4.1 obtained in this paper shows that Forti's conjecture for discontinuous neural networks with nonlinear growth activations and without delays is true.

5. Illustrative Example

Example 5.1.

Consider the three-dimensional neural network (2.1) defined by  ,

It is easy to see that $g$ is discontinuous, unbounded, and nonmonotonic and satisfies the assumptions $(H_1)$ and $(H_3)$.  ,  . Take   and  , then  , and we have

All the assumptions of Theorem 4.1 hold and the neural network in Example 5.1 has a unique  -periodic solution which is globally asymptotically stable.

Figures 1 and 2 show the state trajectory of this neural network with random initial value  . It can be seen that this trajectory converges to the unique periodic solution of this neural network. This is in accordance with the conclusion of Theorem 4.1.
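The convergence behaviour described above can be imitated on a much simpler toy problem. The sketch below is not the three-dimensional network of Example 5.1, whose data are not reproduced here; it is a hypothetical scalar network $x' = -ax + b\,g(x) + I(t)$ with $a = 1$, $b = -1$, a nondecreasing activation with a jump at the origin and square-root growth, and input $I(t) = 2\sin t$. Since $b < 0$ and the activation is nondecreasing, the distance between any two solutions contracts, so two trajectories started far apart should end up on the same periodic orbit. Explicit Euler with a small step is used as a crude stand-in for a proper Filippov solver.

```python
import math

def g(x):
    """Hypothetical activation: discontinuous at 0, unbounded, growth like |x|**0.5."""
    if x > 0:
        return 1.0 + math.sqrt(x)
    if x < 0:
        return -1.0 - math.sqrt(-x)
    return 0.0  # any value in [-1, 1] would be admissible at the jump

def simulate(x0, a=1.0, b=-1.0, omega=2.0 * math.pi, t_end=20.0, dt=1e-3):
    """Explicit Euler for x' = -a*x + b*g(x) + I(t) with I(t) = 2*sin(2*pi*t/omega)."""
    x, t = x0, 0.0
    for _ in range(int(round(t_end / dt))):
        inp = 2.0 * math.sin(2.0 * math.pi * t / omega)
        x += dt * (-a * x + b * g(x) + inp)
        t += dt
    return x

x_a = simulate(3.0)    # two arbitrary initial states
x_b = simulate(-1.0)
gap = abs(x_a - x_b)
print(gap)
```

The residual gap is of the order of the step size because Euler steps chatter across the discontinuity; shrinking `dt` reduces it.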

References

Di Marco M, Forti M, Tesi A: Existence and characterization of limit cycles in nearly symmetric neural networks. IEEE Transactions on Circuits and Systems I 2002,49(7):979-992. 10.1109/TCSI.2002.800481

Chen B, Wang J: Global exponential periodicity and global exponential stability of a class of recurrent neural networks. Physics Letters A 2004,329(1-2):36-48. 10.1016/j.physleta.2004.06.072

Cao J: New results concerning exponential stability and periodic solutions of delayed cellular neural networks. Physics Letters A 2003,307(2-3):136-147. 10.1016/S0375-9601(02)01720-6

Liu Z, Chen A, Cao J, Huang L: Existence and global exponential stability of periodic solution for BAM neural networks with periodic coefficients and time-varying delays. IEEE Transactions on Circuits and Systems I 2003,50(9):1162-1173. 10.1109/TCSI.2003.816306

Wu H, Li Y: Existence and stability of periodic solution for BAM neural networks with discontinuous neuron activations. Computers & Mathematics with Applications 2008,56(8):1981-1993. 10.1016/j.camwa.2008.04.027

Wu H, Xue X, Zhong X: Stability analysis for neural networks with discontinuous neuron activations and impulses. International Journal of Innovative Computing, Information and Control 2007,3(6B):1537-1548.

Wu H: Stability analysis for periodic solution of neural networks with discontinuous neuron activations. Nonlinear Analysis: Real World Applications 2009,10(3):1717-1729. 10.1016/j.nonrwa.2008.02.024

Wu H, San C: Stability analysis for periodic solution of BAM neural networks with discontinuous neuron activations and impulses. Applied Mathematical Modelling 2009,33(6):2564-2574. 10.1016/j.apm.2008.07.022

Papini D, Taddei V: Global exponential stability of the periodic solution of a delayed neural network with discontinuous activations. Physics Letters A 2005,343(1–3):117-128.

Wu H, Xue X: Stability analysis for neural networks with inverse Lipschitzian neuron activations and impulses. Applied Mathematical Modelling 2008,32(11):2347-2359. 10.1016/j.apm.2007.09.002

Wu H, Sun J, Zhong X: Analysis of dynamical behaviors for delayed neural networks with inverse Lipschitz neuron activations and impulses. International Journal of Innovative Computing, Information and Control 2008,4(3):705-715.

Wu H: Global exponential stability of Hopfield neural networks with delays and inverse Lipschitz neuron activations. Nonlinear Analysis: Real World Applications 2009,10(4):2297-2306. 10.1016/j.nonrwa.2008.04.016

Forti M, Nistri P: Global convergence of neural networks with discontinuous neuron activations. IEEE Transactions on Circuits and Systems I 2003,50(11):1421-1435. 10.1109/TCSI.2003.818614

Forti M, Nistri P, Papini D: Global exponential stability and global convergence in finite time of delayed neural networks with infinite gain. IEEE Transactions on Neural Networks 2005,16(6):1449-1463. 10.1109/TNN.2005.852862

Forti M, Grazzini M, Nistri P, Pancioni L: Generalized Lyapunov approach for convergence of neural networks with discontinuous or non-Lipschitz activations. Physica D 2006,214(1):88-99. 10.1016/j.physd.2005.12.006

Clarke FH: Optimization and Nonsmooth Analysis, Canadian Mathematical Society Series of Monographs and Advanced Texts. John Wiley & Sons, New York, NY, USA; 1983:xiii+308.

Filippov AF: Differential Equations with Discontinuous Right-Hand Side, Mathematics and Its Applications (Soviet Series). Kluwer Academic Publishers, Boston, Mass, USA; 1984.

Aubin J-P, Cellina A: Differential Inclusions: Set-Valued Maps and Viability Theory, Grundlehren der Mathematischen Wissenschaften. Volume 264. Springer, Berlin, Germany; 1984:xiii+342.

Aubin J-P, Frankowska H: Set-Valued Analysis, Systems and Control: Foundations and Applications. Volume 2. Birkhäuser, Boston, Mass, USA; 1990:xx+461.

Dugundji J, Granas A: Fixed Point Theory. Vol. I, Monografie Matematyczne, 61. Polish Scientific, Warsaw, Poland; 1982.

Papageorgiou NS: Convergence theorems for Banach space valued integrable multifunctions. International Journal of Mathematics and Mathematical Sciences 1987,10(3):433-442. 10.1155/S0161171287000516

Acknowledgments

The authors are extremely grateful to anonymous reviewers for their valuable comments and suggestions, which help to enrich the content and improve the presentation of this paper. This work is supported by the National Science Foundation of China (60772079) and the National 863 Plans Projects (2006AA04z212).

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Li, Y., Wu, H. Global Stability Analysis for Periodic Solution in Discontinuous Neural Networks with Nonlinear Growth Activations. Adv Differ Equ 2009, 798685 (2009). https://doi.org/10.1155/2009/798685
